|

Final year PhD candidate in the Visual Geometry Group (VGG), Oxford. Co-advised by Andrew Zisserman, Andrea Vedaldi, João Henriques and Iro Laina. Funded by the EPSRC+AWS fellowship with AIMS CDT. Research Interests:

In parallel, I also work as an AI consultant for an on-device AI startup and an LLM content moderation company. Previously, I was a Senior Researcher at Qualcomm AI Research. I have also been fortunate to spend time at Voxel51, IBM Research (Bangalore and Almaden Lab), IFPEN (Paris), and TCS Research. Education:

Feel free to set up a call if you want to discuss ideas around startups or AI (CV / ML / LLMs), or if you want to collaborate. |

|

Yash Bhalgat*, Vadim Tschernezki*, Iro Laina, João Henriques, Andrea Vedaldi, Andrew Zisserman ACCV, 2024 We propose a 3D-aware method for object tracking in long egocentric videos, leveraging scene geometry to handle rapid motion and occlusions. Our approach improves tracking accuracy, reduces ID switches by up to 80%, and enables applications like 3D object reconstruction and amodal segmentation. |

|

Yash Bhalgat, Iro Laina, João Henriques, Andrew Zisserman, Andrea Vedaldi ECCV, 2024 We present Nested Neural Feature Fields (N2F2), a hierarchical approach to 3D scene understanding that encodes multi-scale properties in a unified feature field. Our method outperforms state-of-the-art approaches like LERF and LangSplat on open-vocabulary 3D tasks, especially for complex queries, while enabling faster inference. |

|

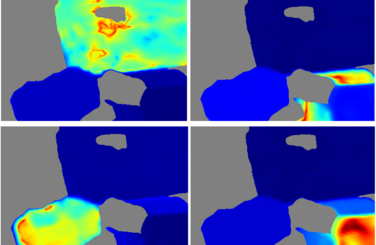

Yash Bhalgat, Iro Laina, João Henriques, Andrew Zisserman, Andrea Vedaldi NeurIPS, 2023 (Spotlight presentation) We present a novel "slow-fast" contrastive fusion method to lift 2D predictions to 3D for scalable instance segmentation, achieving significant improvements without requiring an upper bound on the number of objects in the scene. |

|

|

Yash Bhalgat, João Henriques, Andrew Zisserman CVPR, 2023 An "Epipolar-guided training" method to incorporate multi-view geometric priors into Transformer models, which can be implemented in 150 lines of code. At test time, the Transformer implicitly estimates the epipolar geometry given two images and uses it for downstream predictions, e.g. for pose-invariant retrieval. |

|

|

John Yang, Yash Bhalgat, Simyung Chang, Fatih Porikli, Nojun Kwak WACV, 2022 We propose a tiny deep network in which a subset of layers is applied recursively to refine its previous estimates. During these iterative refinements, we employ learned gating criteria to decide whether to exit the weight-sharing loop, allowing per-sample adaptation in our model. We also predict uncertainty estimates and exploit them in the gating mechanism. |

|

|

Yash Bhalgat, Yizhe Zhang, Jamie Lin, Fatih Porikli NeurIPS, 2020 We introduce a neat trick to enable the execution of convolution operations in the form of efficient, scaled, sum-pooling components. We present a Structural Regularization loss that enables this decomposition with negligible performance loss. Our method is competitive with other tensor decomposition and structured pruning methods. |

|

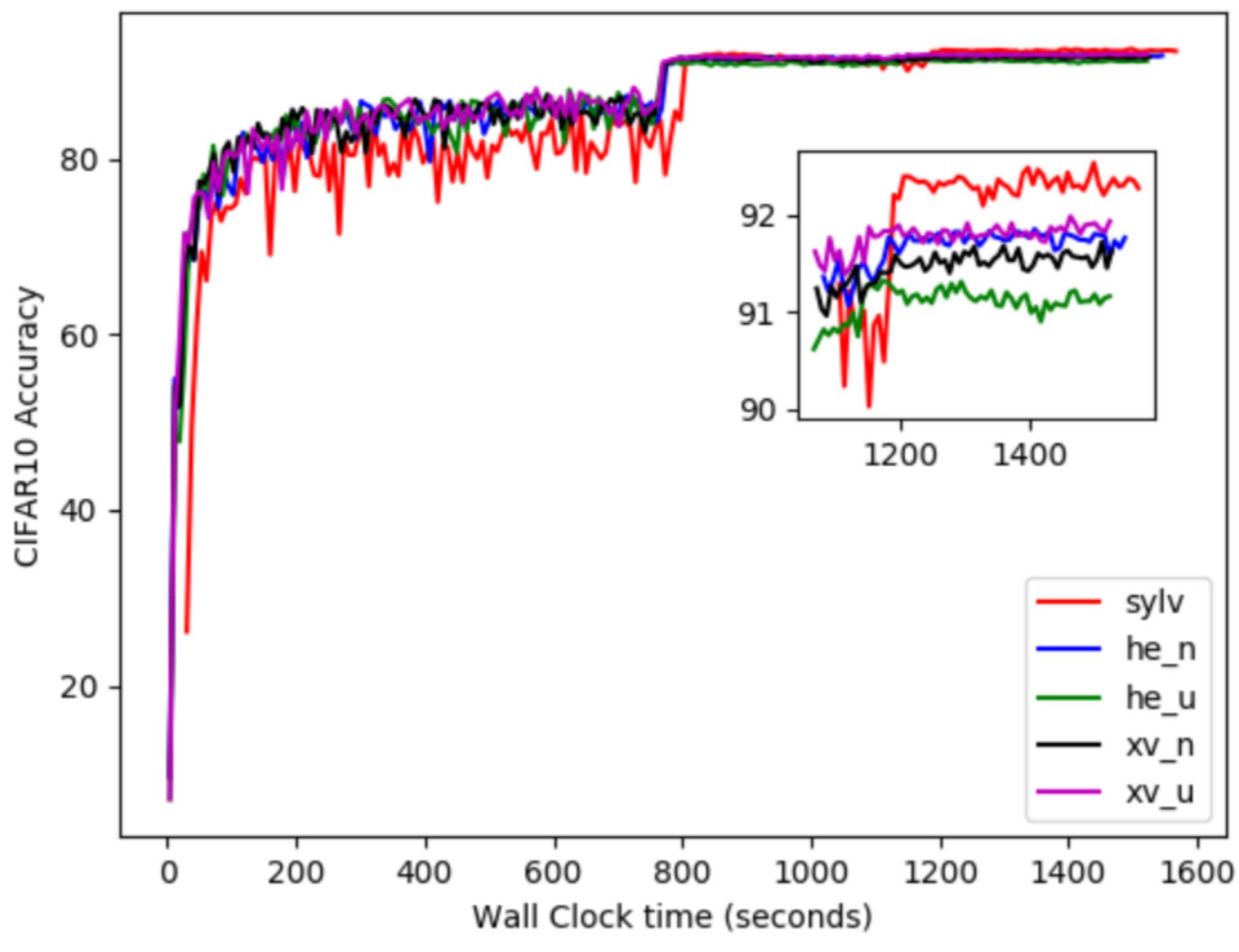

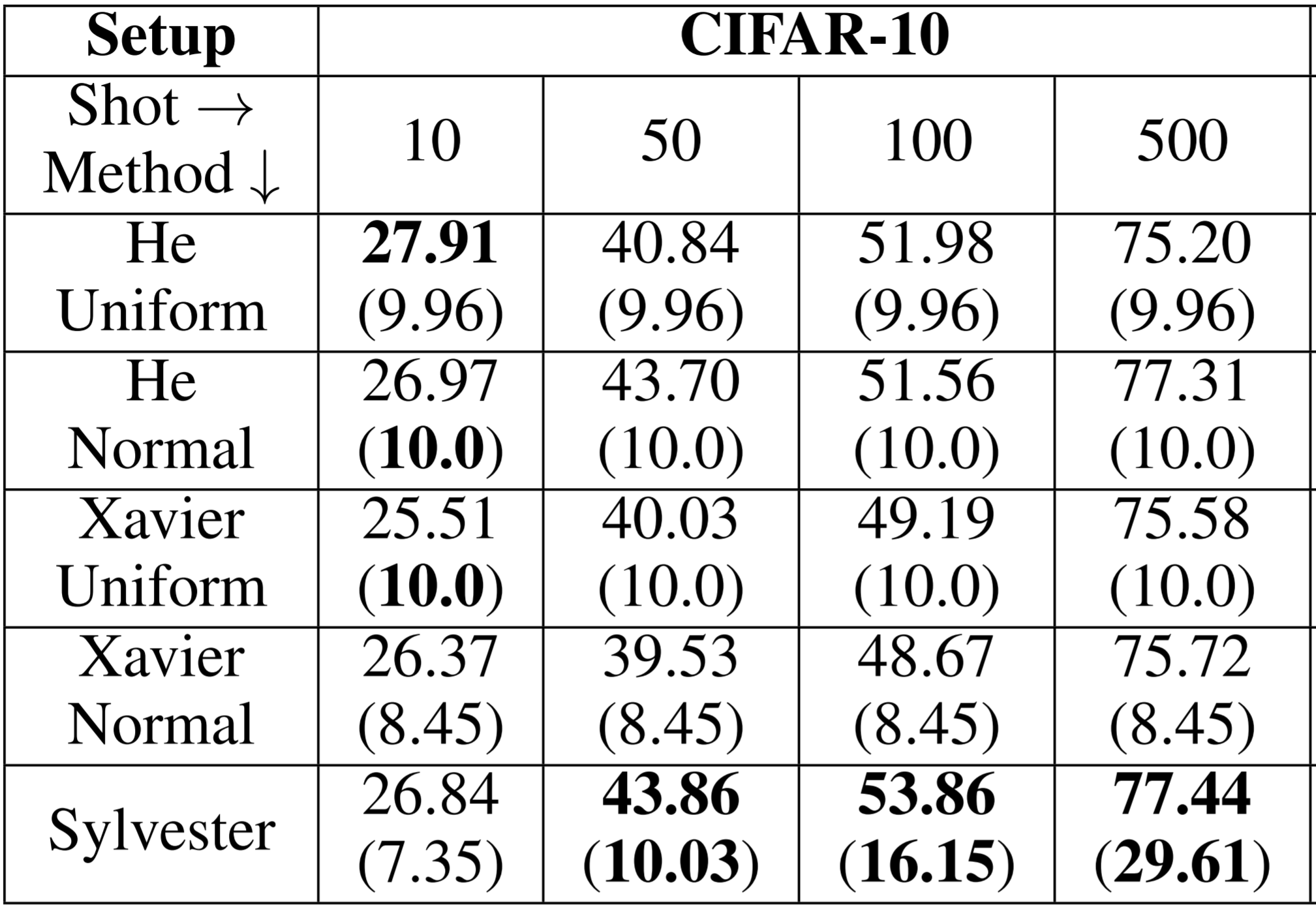

Debasmit Das, Yash Bhalgat, Fatih Porikli Practical Machine Learning for Developing Countries Workshop, ICLR, 2021 We propose a data-driven scheme to initialize the parameters of a neural network. The initialization is cast as an optimization problem, which is restructured into the well-known Sylvester equation that has fast and efficient gradient-free solutions. We show that our proposed method is especially effective in few-shot and fine-tuning settings. |

|

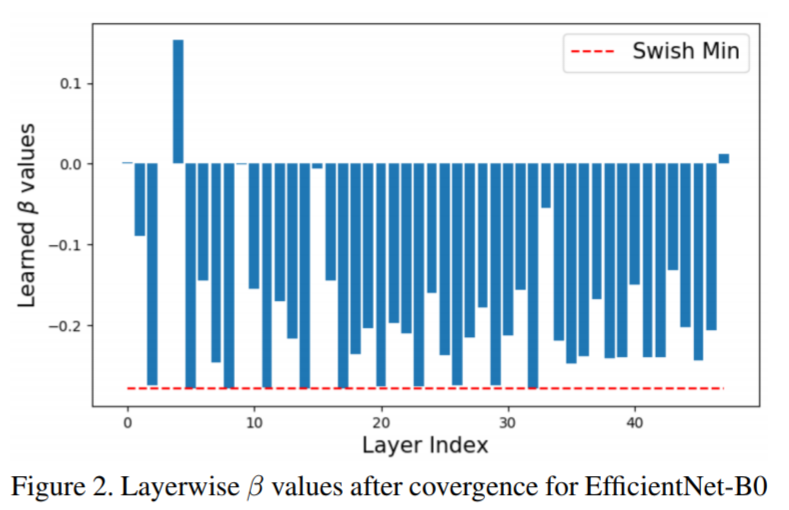

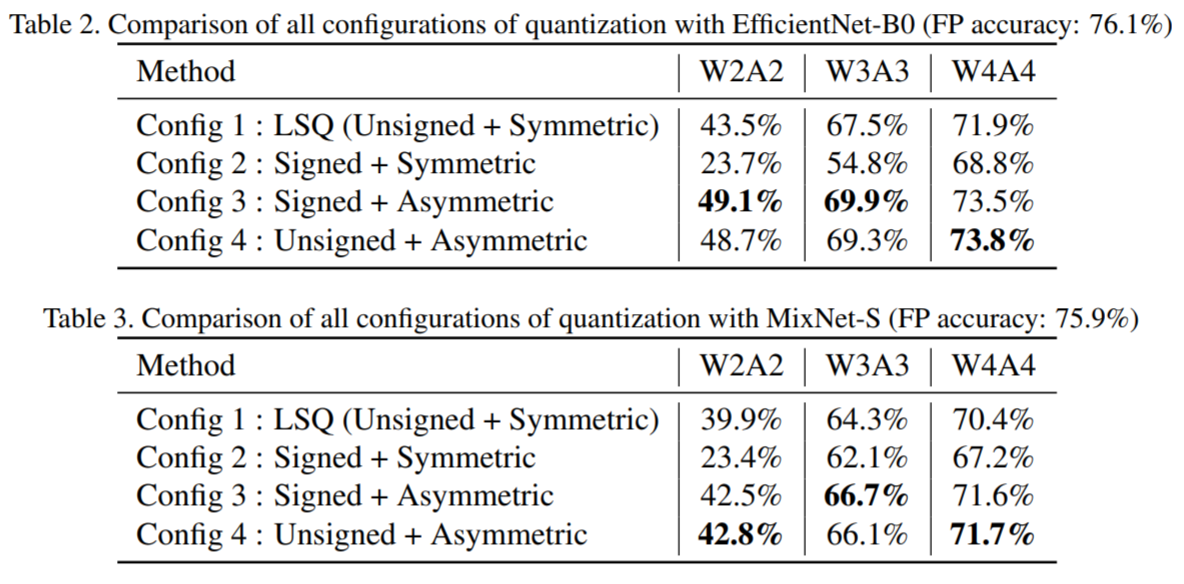

Yash Bhalgat, Jinwon Lee, Markus Nagel, Tijmen Blankevoort, Nojun Kwak Efficient Deep Learning in Computer Vision Workshop, CVPR, 2020 We introduce a general asymmetric quantization scheme with trainable scale and offset parameters. LSQ+ shows SOTA results for EfficientNet and MixNet, outperforming LSQ for low-bit quantization. |

|

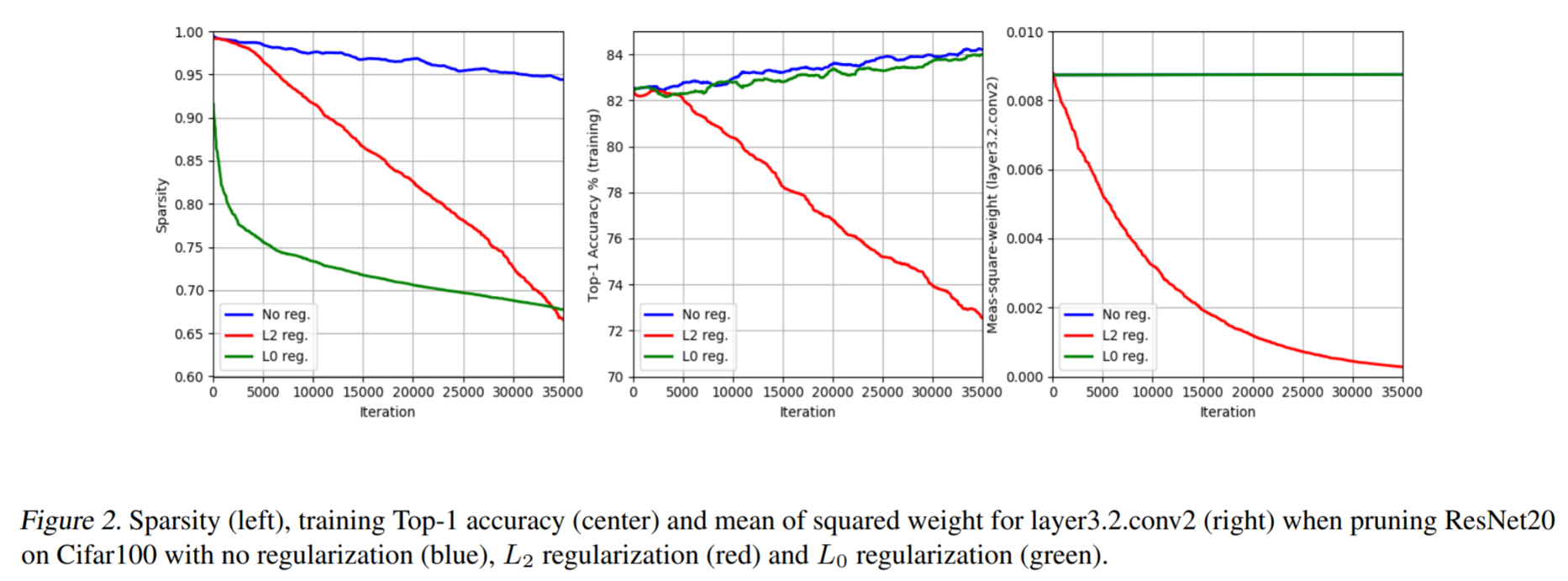

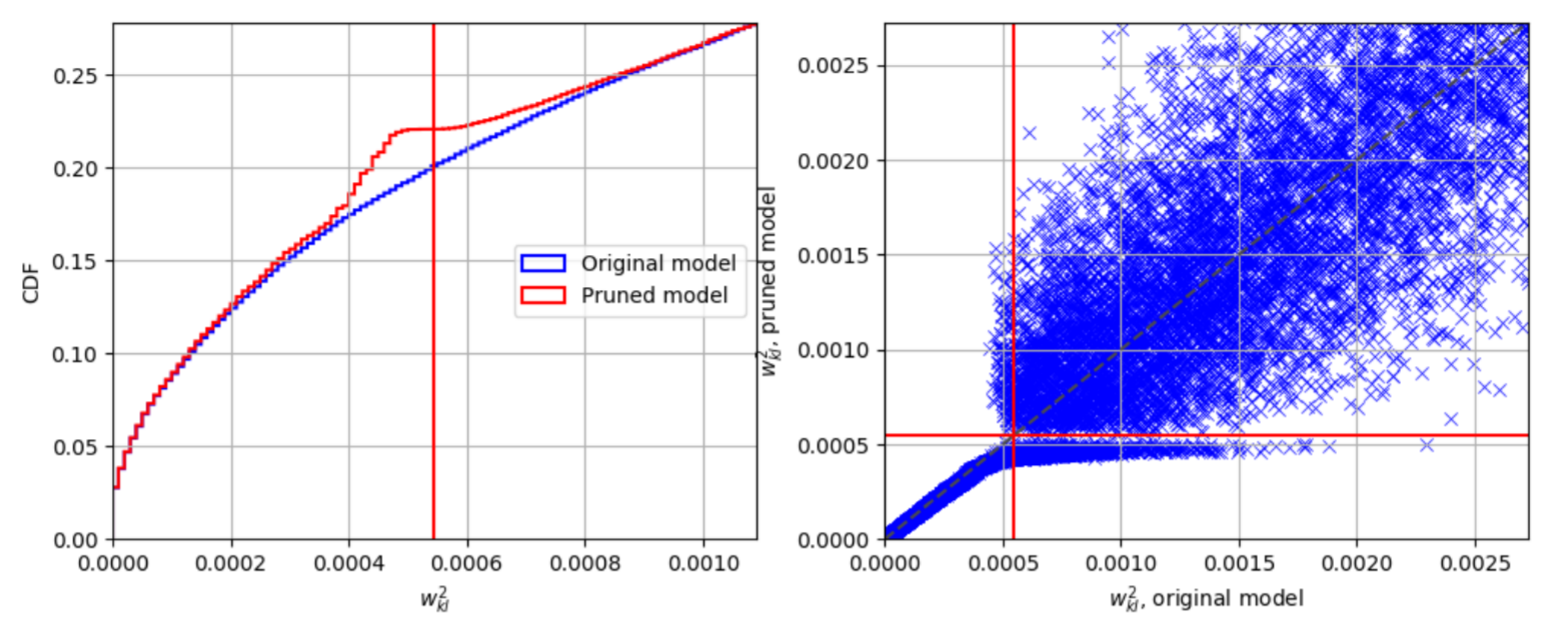

Kambiz Azarian, Yash Bhalgat, Jinwon Lee, Tijmen Blankevoort arXiv, 2020 We propose an end-to-end differentiable method for learning layerwise pruning thresholds, which results in SOTA model compression ratios with AlexNet, ResNet and EfficientNet. Our method also generates a trail of checkpoints with different accuracy-efficiency operating points. |

|

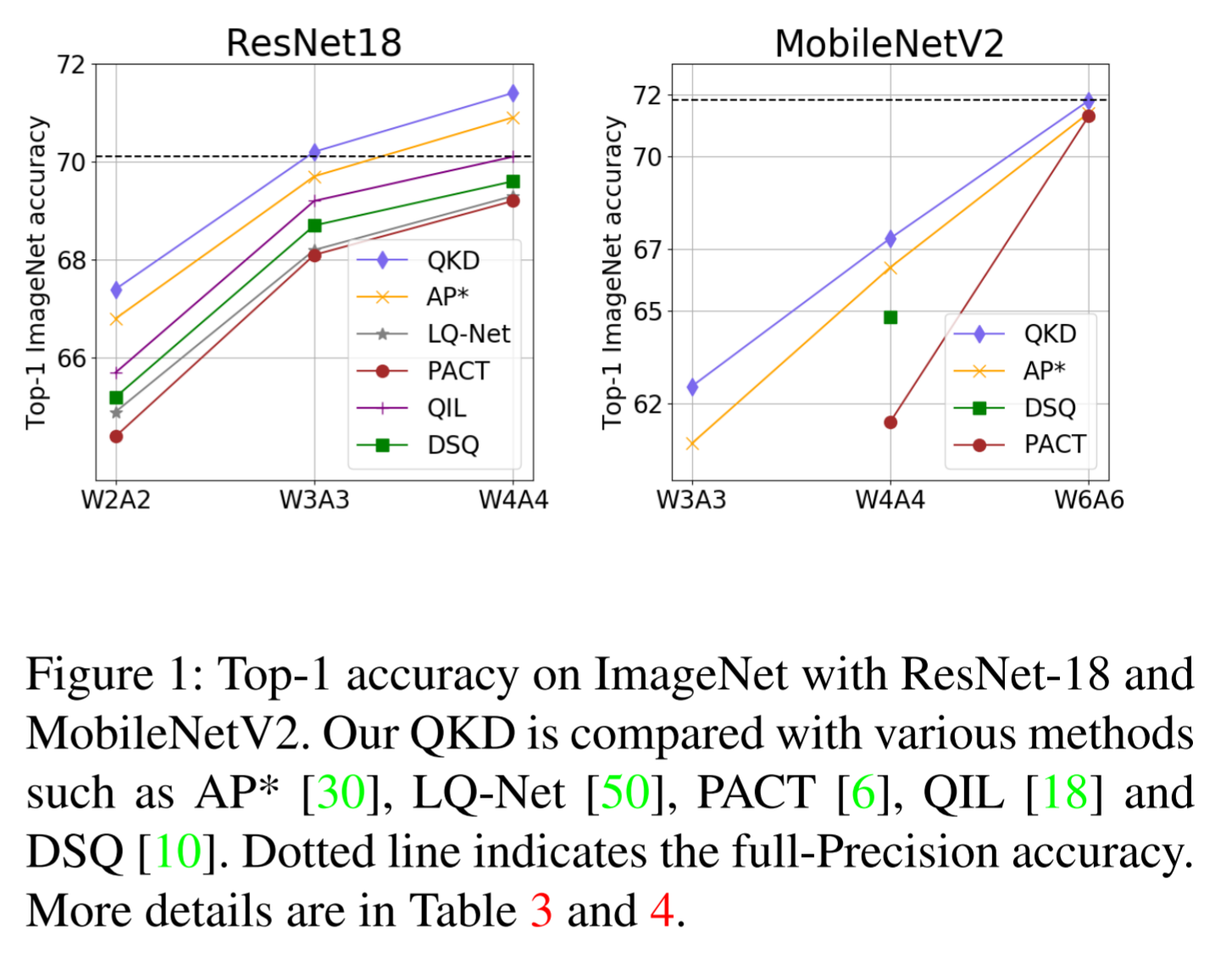

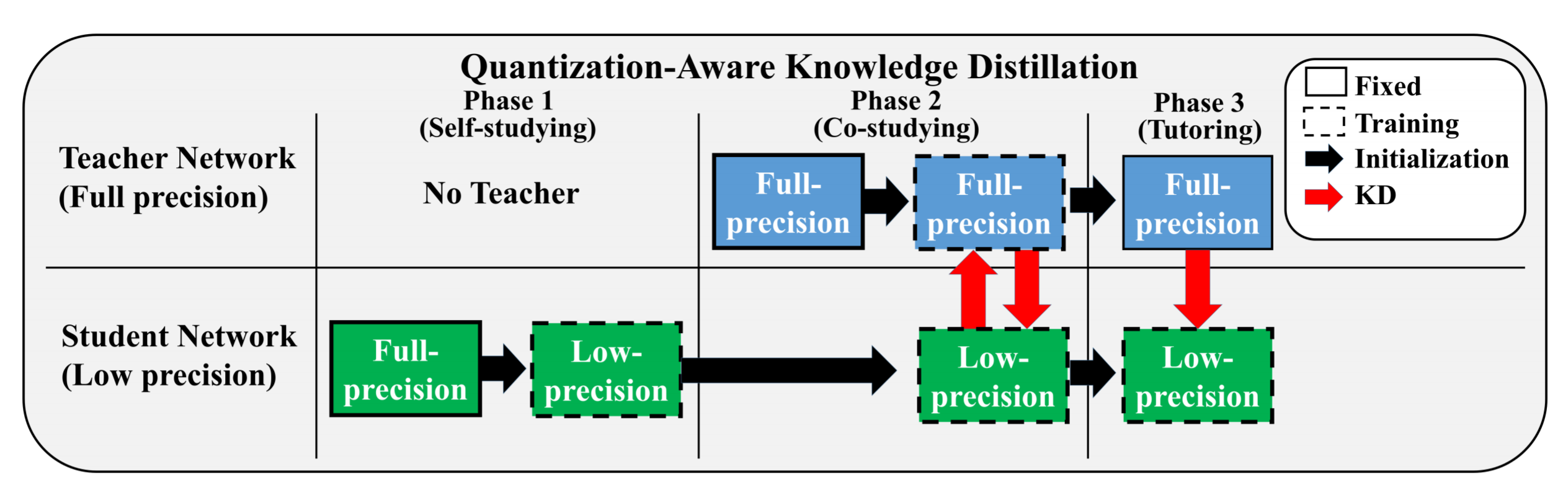

Yash Bhalgat*, Jangho Kim*, Jinwon Lee, Chirag Patel, Nojun Kwak arXiv, 2020 Low-bit quantization and knowledge distillation (KD) often don't go well together, but both are important approaches to reduce a model's memory footprint. We propose an effective method to combine the two and show results that outperform all existing quantization/KD approaches. |

|

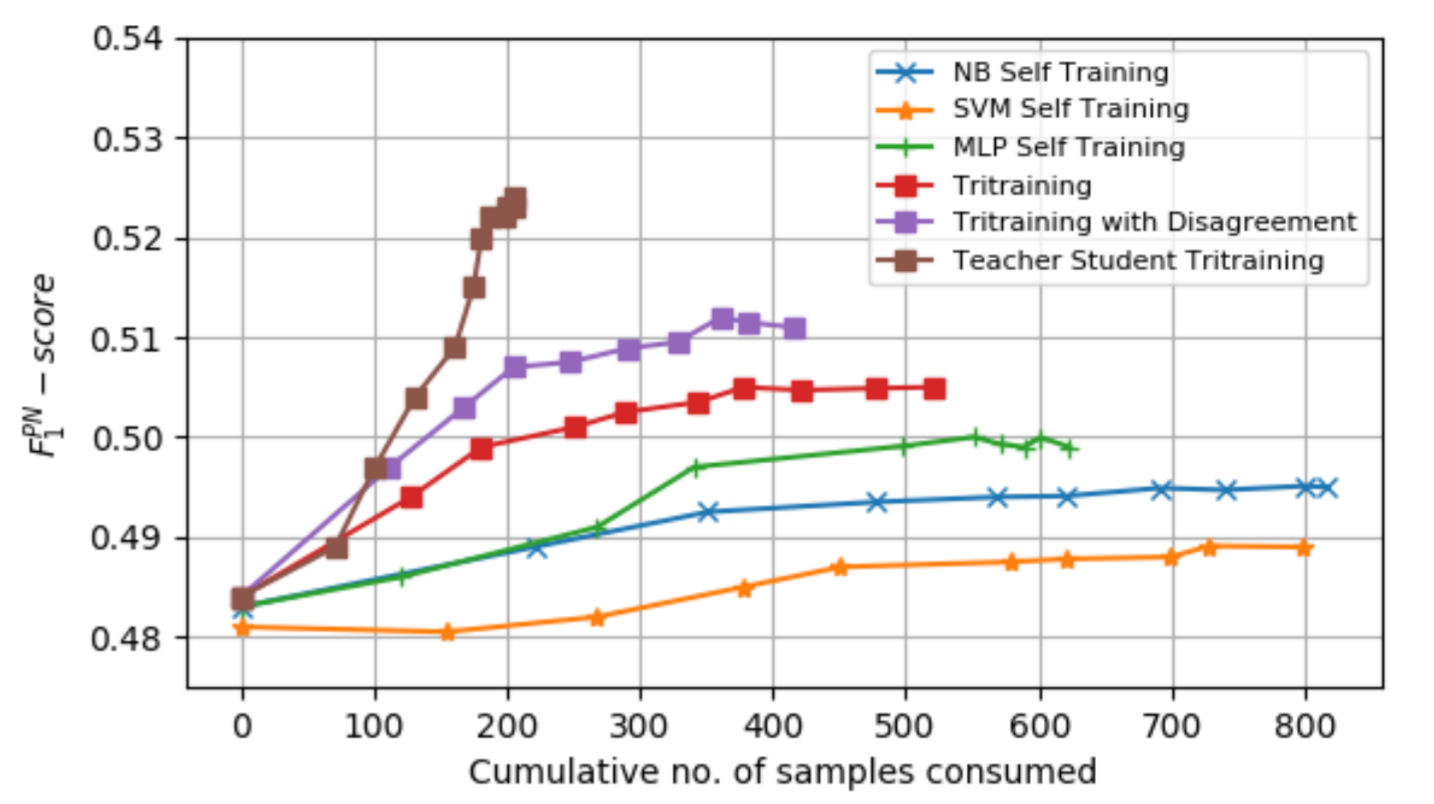

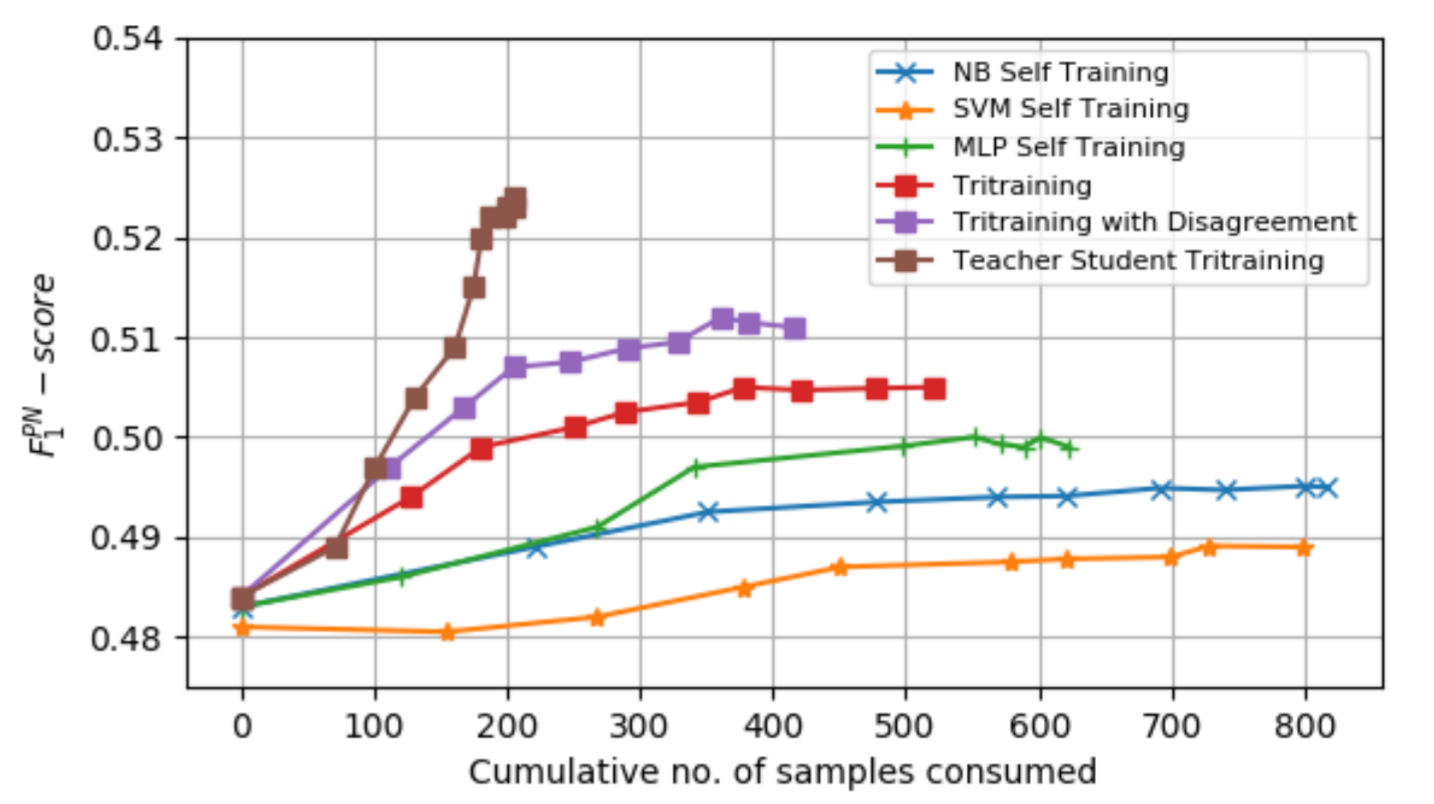

Yash Bhalgat, Zhe Liu, Pritam Gundecha, Jalal Mahmud, Amita Misra KONVENS, 2019 Teacher-student tri-training is a semi-supervised learning method that uses three classifiers with adaptive teacher and student thresholds. |

|

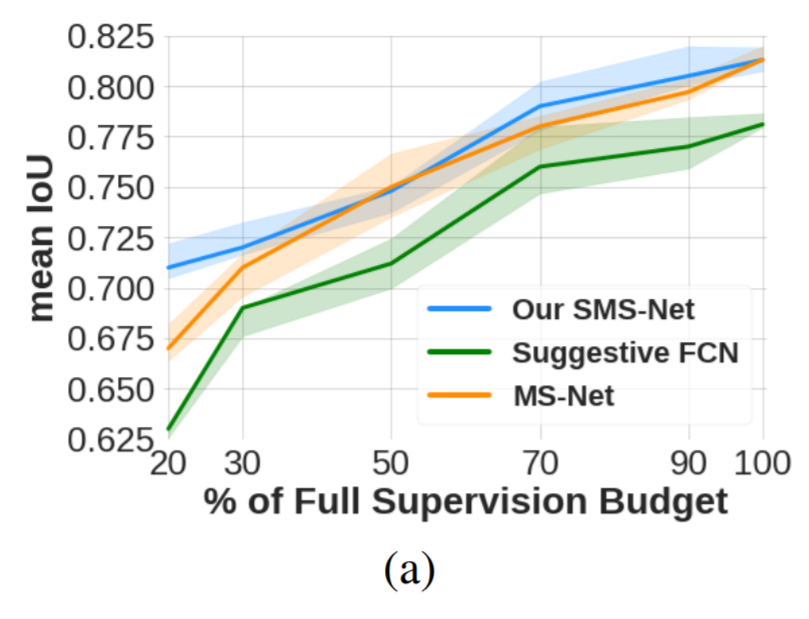

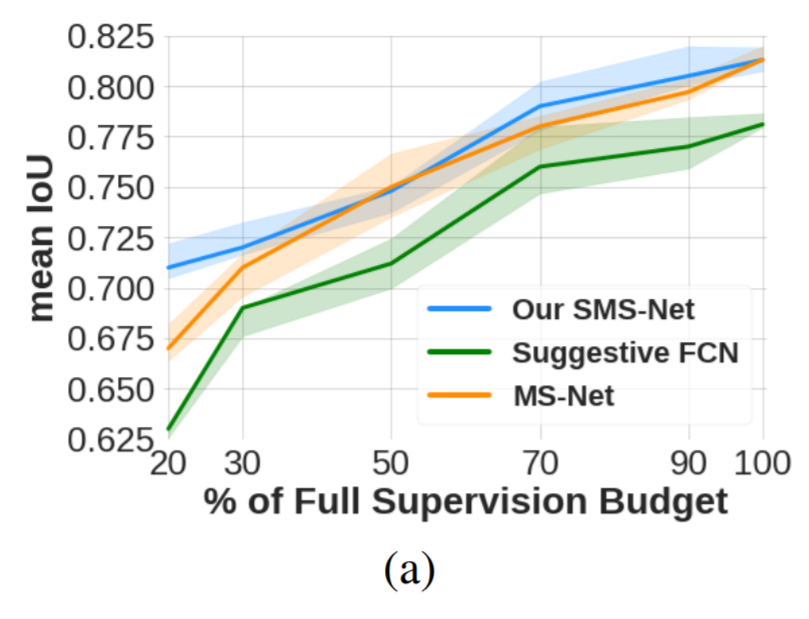

Yash Bhalgat*, Meet Shah*, Suyash Awate Medical Imaging meets NeurIPS workshop, 2019 For medical image segmentation, we present a budget-based cost-minimization framework in a mixed-supervision setting that combines dense segmentations, bounding boxes, and landmarks. |

|

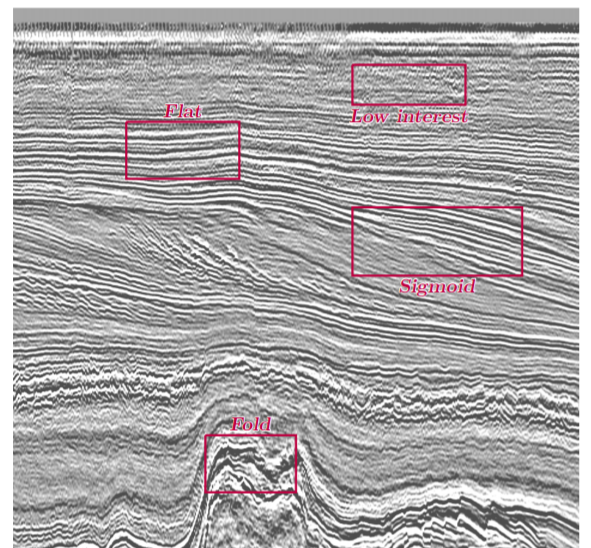

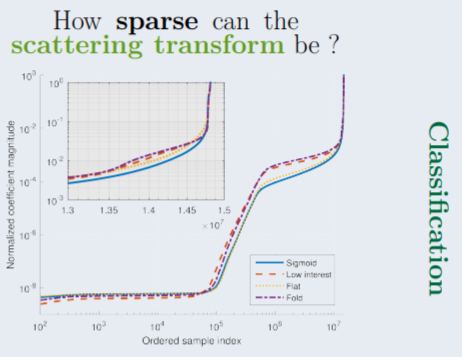

Yash Bhalgat, Jean Charlety, Laurent Duval ICASSP, 2018 We use scattering wavelet transforms to extract sparse feature sets from seismic data. We show that this method, combined with simple PCA-based feature selection, leads to promising classification performance at an affordable computational cost. |

|

I play the Tabla, an Indian percussion instrument, and have a Visharad (≈ Bachelor of Music) in Indian Classical music. I've also briefly tried to learn the Piano and Harmonica. I am a natural beatboxer (check this out) and I sometimes post music videos here: |

|

Website template borrowed from here. |